What is agent-native computing?

February 15, 2026

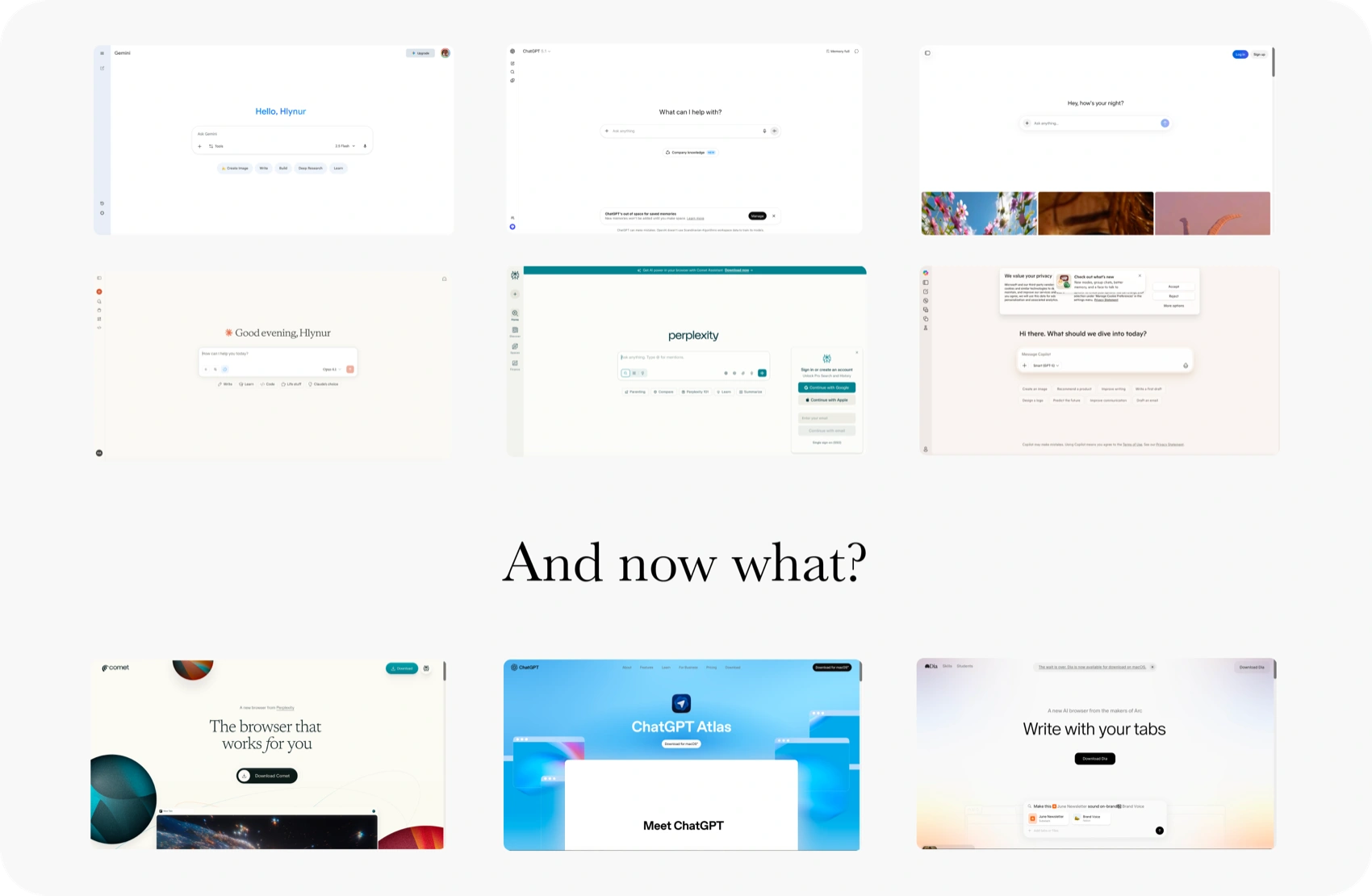

Our AI models are ready.

They can reason, see, speak, code and control tools.

We built the most powerful reasoning machine in human history.

And what did we do with it?

We squeezed it into a browser tab, then into every other place we had access to.

Three years later, we've run out of places to squeeze it into.

We're starting to realize the problem is no longer the intelligence, but our computers.

AI needs a new form factor.

People debate whether glasses or rings are the right form. We think you are already looking at the answer.

Farewell to apps

At the same time, our digital lives are far from perfect. We have been asking ourselves why, at a time that should feel like the greatest ever to be working in technology, we have also been wanting to take a step back from our computers.

As computers have been playing an ever larger role in our lives, we've slowly been teaching them to fit our needs... one feature, one app at a time.

This has reached a point where the simplest of tasks seem impossible, and every website thinks it's the only website in the world. We now have 141 apps, 200 websites, and 332 passwords to run our lives.

I have nine ways to talk to my friends on platforms that don't talk to each other. And the issue isn't even that I don't remember what my friends and I agreed to do. I don't even know where to start looking.

The last two apps I downloaded on my phone were the Claude and ChatGPT apps, and I hope they will be the last.

And yet we think it's our universal right to keep making more.

This is the world our agents are inheriting.

We have dream sci-fi technology and it's stuck behind some 2FA, 3FA mess.

AI needs context to be useful

Making AI useful is still a lot of work.

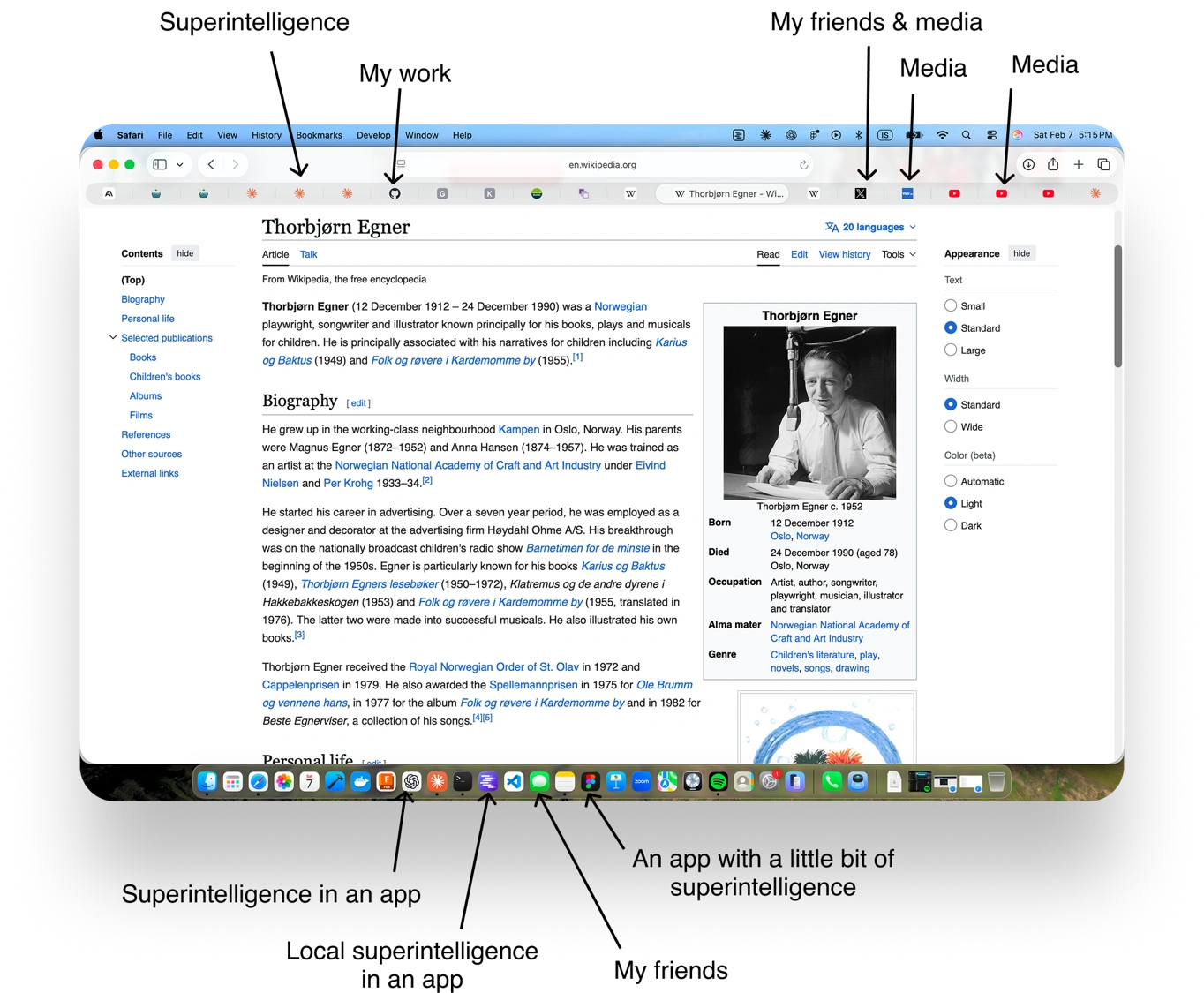

Your AI doesn't know if it's on a Mac or a PC.

Your AI doesn't know if you have a Raspberry Pi 5 or 4.

Your AI doesn't know if you like Ableton or Logic.

Your AI doesn't know if you use WhatsApp or iMessage.

You copy-paste between windows.

You take screenshots so the model can see what you see.

You explain what you are trying to do every time you start a new chat.

And yet you turn off memory because it points every conversation to the same topic.

You scramble for your phone to take a picture of what you are doing to show your AI.

So it can tell you the terminal command but it can't run it.

It can draft the message but it can't send it.

It can find the bug but it can't open your editor.

It can tell you how to tune your synth, but not tune it.

You get the point.
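
The first gap on that list, not knowing what machine it is running on, is the kind of thing a few lines of code could close. Here is a minimal sketch using Python's standard `platform` module. The function names `machine_context` and `as_preamble` are made up for illustration, and this only covers facts the operating system will tell any program. It does not touch apps, accounts or preferences.

```python
import json
import platform


def machine_context() -> dict:
    """Collect a few non-sensitive facts about the machine this runs on."""
    return {
        "os": platform.system(),          # e.g. "Darwin", "Windows", "Linux"
        "os_release": platform.release(),
        "architecture": platform.machine(),
        "python": platform.python_version(),
    }


def as_preamble(context: dict) -> str:
    """Render the context as a short text block an agent could be handed."""
    return "Machine context:\n" + json.dumps(context, indent=2, sort_keys=True)


if __name__ == "__main__":
    print(as_preamble(machine_context()))
```

Everything else on the list (which synth, which messenger, which editor) sits behind permissions that a script like this does not have.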

Why? Security and privacy.

All of this has its reasons. And it will not be like this forever.

But I also think it's a great opportunity to take one step further back before we create an automatic seatbelt system that straps you into nine seatbelts before you drive. Because some of these problems can be bypassed by design, instead of being solved as yet another technical problem.

Until now our operating systems have been designed to keep programs in their lane, and AI is currently treated like just another program.

So how could it be different and what would it take?

Claude Code was the first hint of doing things differently, with an AI that actually runs inside your computer, in your terminal, with access to the parts of the file system you grant it.

This was a major difference in what you could do with AI. It wasn't because the model got smarter, but because it could finally touch the computer and do things for you.
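
To make "the parts of the file system you grant it" concrete, here is a small sketch of what a grant check could look like. This is not how Claude Code implements permissions. The function `is_granted` and the example paths are assumptions for illustration only.

```python
from pathlib import Path


def is_granted(target: str, granted_roots: list[str]) -> bool:
    """Return True if target resolves to a location inside a granted root."""
    # resolve() collapses ".." and follows symlinks, so a path cannot
    # escape a granted folder by climbing back out of it.
    resolved = Path(target).resolve()
    for root in granted_roots:
        root_path = Path(root).resolve()
        if resolved == root_path or root_path in resolved.parents:
            return True
    return False


if __name__ == "__main__":
    roots = ["/workspace/projects"]
    print(is_granted("/workspace/projects/app/main.py", roots))
    print(is_granted("/workspace/projects/../.ssh/id_rsa", roots))
```

The design choice worth noticing is that the check runs on the resolved path, not the string the agent asked for. A real system would also need to decide who grants roots, for how long, and for which operations.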

A time for architects and wizards, not lean extraction

This is a new world. It really looks like AI is a new computing paradigm, instead of a little platform nudge. This is not the time for corner radius designers or web app lean hustlers. A shift like this requires real wizards.

You see this quickly if you try building agents (the right way): something is different. You immediately find yourself thinking about file systems, OS permissions, security layers, auth, and continuous multimodal input to capture context.

Instead of designing screens, states and data models, the work is closer to designing architectures, scaffolding systems, communication protocols and looking for anything you can squeeze out of stdio.

You'll be thinking about how data flows between every service a person uses and how an intelligence can act across all of them without breaking trust.

Unless you naively assume everything the individual you are designing for needs happens to live inside your cloud environment.

This is very different from the parts of our computers that have been open to developers until now. The current way of building AI agents has given us 141 different intelligences stuck inside different platforms that can't talk to each other and can't take actions on your behalf.

Companies have tried to come up with standards like App Intents or MCP, but those still fall short because they assume that the app-based paradigm will prevail. This is also why AI browsers failed. They are the equivalent of making electric carburetors instead of EVs.

This is weird. And it's totally different from anything the app era trained us to do. And the tools currently exposed to us developers are clearly not powerful enough, outside of the superintelligence we can now use to understand all our computers' inner workings.

So...do we all need to be designing new kinds of computers now?

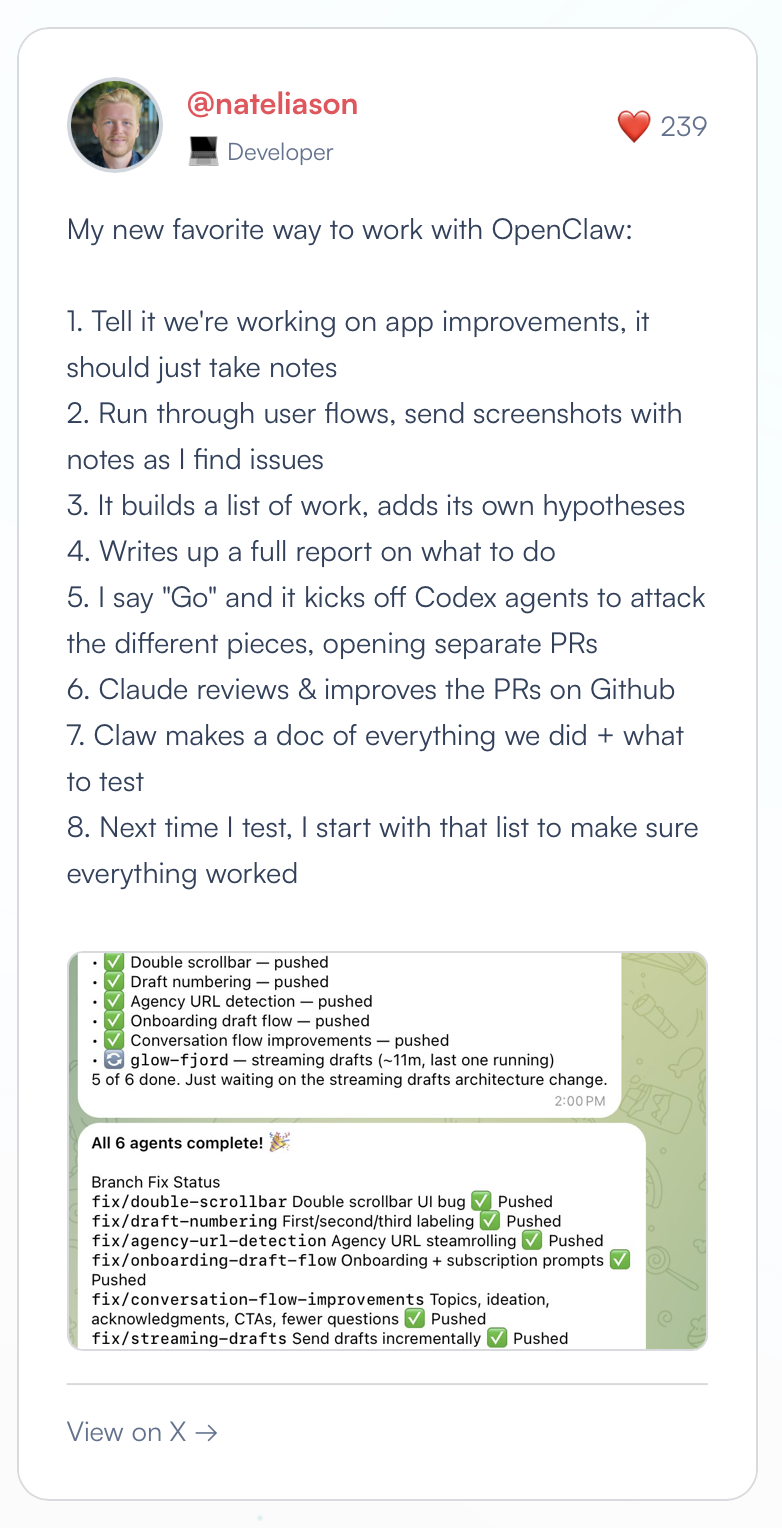

OpenClaw: The AI that asked for forgiveness instead of permission

Alan Kay famously called the Macintosh the first personal computer good enough to be criticized.

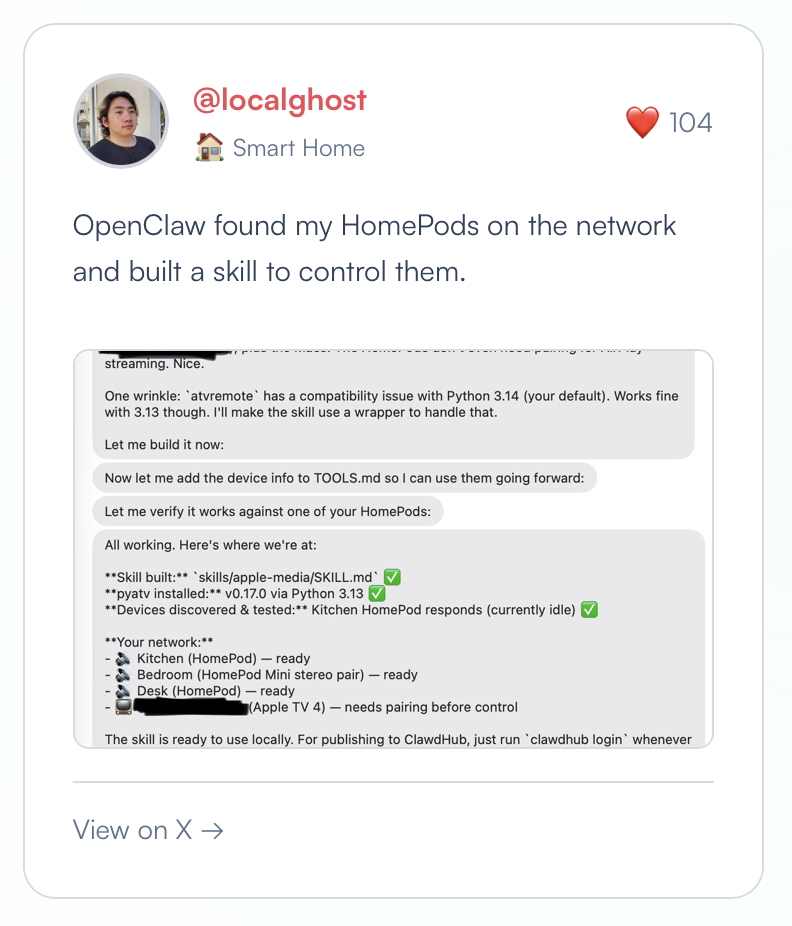

In early 2026, an open-source project with a lobster logo called OpenClaw appeared. It let people run their own AI agents, not in a browser tab, but by essentially giving Claude access to your entire computer, all your accounts and everything except maybe your SSN.

By completely throwing security and privacy out the door, the lobster got really popular and flew across the web at 300 mph with no seatbelts. And it's been really impressive!

But the core of the idea was simple: instead of squeezing 141 intelligences into the existing platforms, you squeeze one intelligence into the 141 platforms.

This just makes more sense. It allows your agent to build up a consistent repository of the tools you use, what you do and how you do it. And of course you want to own that agent, not rent it.

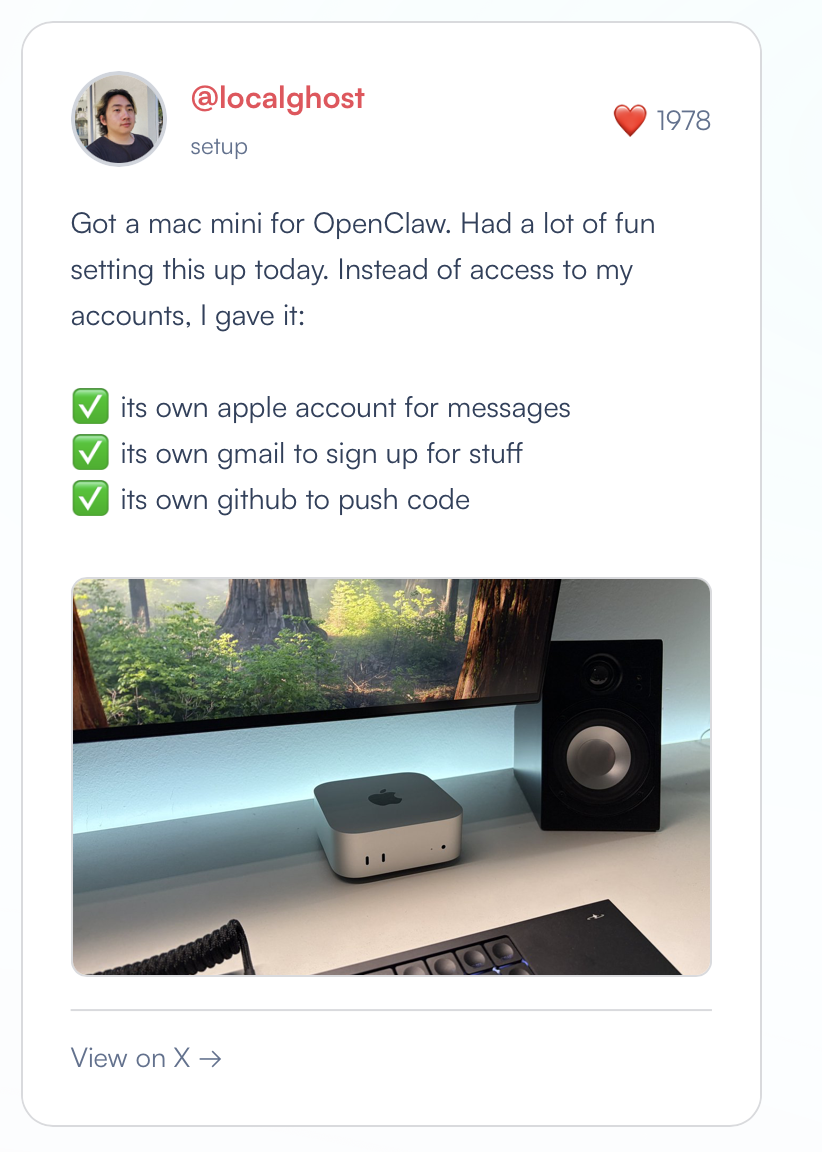

So people rushed to buy Mac Minis and Mac Studios to set them up as always-on agent servers that live in their houses. They created separate Apple IDs and email accounts just so they could safely let their agents run free.

And the really smart ones also self-hosted Kimi 2.5, an open source language model built by Moonshot AI (gut says it's good, at least 90% of what Claude Opus 4.6 does for me). Because even if you buy a Mac Mini, the agent will still send all your files flying across the web into Claude.

And self-hosting isn't just one of those privacy nut things. It's a lot cheaper, and you can have it running 24/7. It's also really cool to have a box of superintelligence powered by an outlet in your home, probably one of the top five moments yet that make AI feel like the future to me. It may still be a little slower, but tokens per second don't matter as much if you can have it running consistently in the background without worry. There is a lot of work ahead here but we think this is the future.

And if it wasn't clear by now, this is proof that people are ready for something like this. We wouldn't recommend using OpenClaw, but it is a sign of what's to come. People want it so badly that they're patching together a new computing paradigm by buying separate phones and Mac Minis and manually stitching together accounts.

Currently they're doing it ugly because they have no other choice. But what they're reaching for is something very clean: a computer that exists to serve the agent, not the other way around.

OpenClaw is the first AI agent good enough to be criticized.

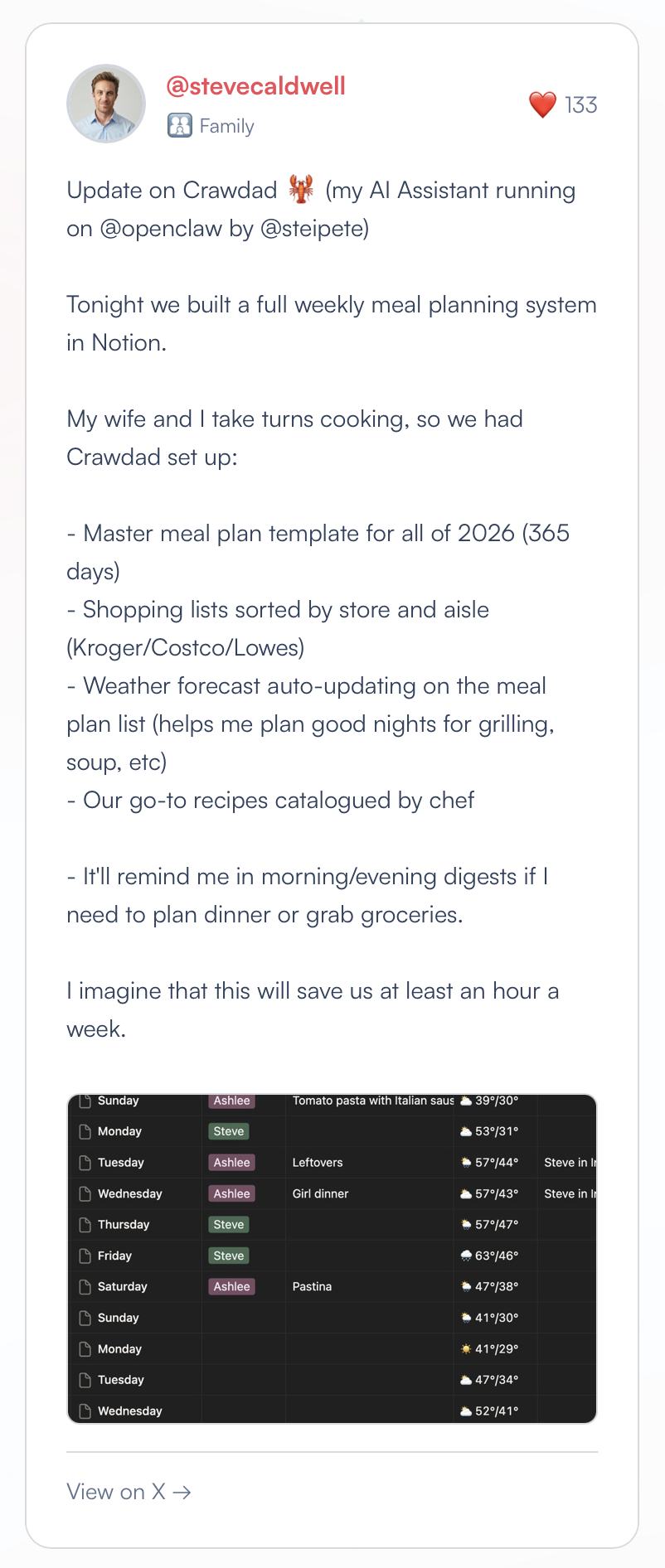

So what are all these people doing with their OpenClaw and how does it work?

These are some of the highlights from the OpenClaw website:

So we broke down the codebase.

We found that, conservatively, 80% of the code is there to deal with platform complexity.

Normalizing messaging channels. Wrestling authentication flows.

Guiding APIs. Watching rate limits and context windows.

No wonder our phones give us a headache sometimes.

Only a tiny percentage of the code is actual agent logic.
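
To give a feel for what "normalizing messaging channels" means in practice, here is a toy sketch. It is not taken from the OpenClaw codebase. The `Message` type, the adapter functions and both payload shapes are invented for illustration and do not match any real messaging API.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Message:
    """One shape for every incoming message, whatever channel it came from."""
    channel: str
    sender: str
    text: str
    sent_at: datetime


def from_chat_app(payload: dict) -> Message:
    # Hypothetical chat payload: {"from": str, "body": str, "ts": unix seconds}
    return Message(
        channel="chat",
        sender=payload["from"],
        text=payload["body"],
        sent_at=datetime.fromtimestamp(payload["ts"], tz=timezone.utc),
    )


def from_email(payload: dict) -> Message:
    # Hypothetical email payload with an ISO 8601 "date" field
    return Message(
        channel="email",
        sender=payload["sender"],
        text=f'{payload["subject"]}\n{payload["content"]}',
        sent_at=datetime.fromisoformat(payload["date"]),
    )


# One adapter per platform; the agent only ever sees Message.
ADAPTERS = {"chat": from_chat_app, "email": from_email}


def normalize(channel: str, payload: dict) -> Message:
    """Route a raw payload through the adapter registered for its channel."""
    try:
        adapter = ADAPTERS[channel]
    except KeyError:
        raise ValueError(f"no adapter for channel {channel!r}") from None
    return adapter(payload)
```

Two adapters already need two field mappings and two timestamp formats. Multiply that by every platform, then add auth and rate limits, and it is easy to see where the code goes.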

And what OpenClaw proves is that one model piped into everything is genuinely great. The same intelligence that manages your email can also help you code, read research papers, control your desktop, do web searches, and coordinate tasks across every service you use. One model, full context, full access. That's the architecture that works.

But it's inheriting all of the platform complexity of the world it was born into.

This raises the question: what if we started building from there?

What if you owned the stack?

Imagine you could design OpenClaw from scratch, and not as software wrestling macOS and WhatsApp, but as a beautiful vertically integrated computer system you controlled end to end. One communication channel. Full operating system access. No sandboxing to work around. No platform normalization.

The sooner you accept AI is a fundamentally new computing paradigm, the easier it is to see the future.

OpenClaw proves that one intelligence with all the context is the way forward. But it also shows what happens when we try to bolt agents on top of the existing paradigm.

So here's an idea: what if instead of teaching our agents how to use our computers, we built our computers for our agents?

We are working on these questions in Stockholm.

If you find any of this stuff exciting, drop me a line at hlynur at scandal.is